Google Launches AI to Rival ChatGPT

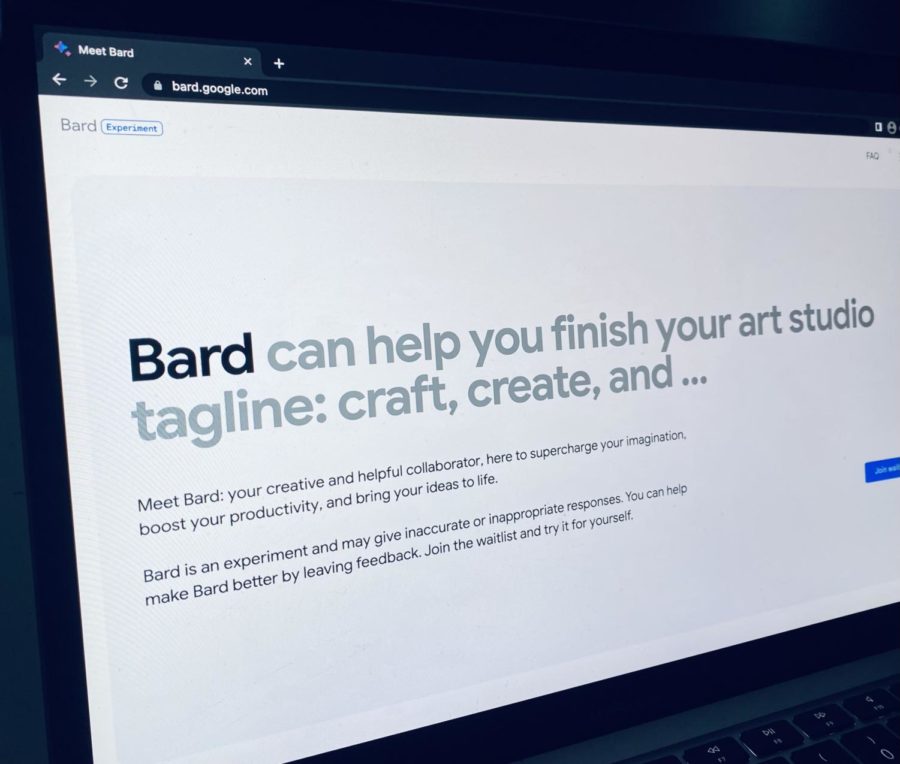

ChatGPT has taken the world by storm. Although it is a young application, only around four months old, its impact on both the workforce and education has been stupendous. Microsoft has launched an AI chatbot on its infamous search engine, Bing, and now Google is catching up with an AI chatbot called “Bard.”

Bard was developed by Sissie Hsiao and Eli Collins, who described their intentions for the application as a way to help people “boost their productivity, accelerate their ideas, and fuel their curiosity.” The application is currently available via waitlist in the U.S. and the U.K., with Google making no statement as to when the chatbot will be available to the public.

The emergence of Bard and other AI chatbots has caused much debate over the ethical issues raised by AI, as well as over its factual errors. In an apparent response to these concerns, Google has specified that Bard is not a replacement for a search engine, but merely a complement to one.

However, Bard has proved more capable than a mere complement. In a test by The Verge, a prominent technology magazine, two conclusions were reached: first, that the application was much faster than its rivals (although this might be due to the relative lack of users), and second, that it was too safe. The author of the article commented on the outrageousness and irreverence of Bing’s chatbot and lamented how uncontroversial the Google chatbot was by comparison.

The desire to steer away from controversy is a major distinguishing factor of Bard. Even so, Bard has already proven to be controversial. In a demo of Bard last month, the chatbot made an error that sent the market value of Google’s parent company, Alphabet, plummeting by about 100 billion dollars. Innovation does not matter nearly as much as results do.

The new boost in AI has certainly accelerated ideas and fueled curiosity. There has been a raging debate in the academic world and in other fields over the potential of new AI: some say it’s the future, while others, such as renowned scholar Noam Chomsky, believe it to be “high tech plagiarism.” I decided to have a conversation with the one that started it all: ChatGPT. Here’s how it went.

MC = Miles Cruz

CGPT = ChatGPT

MC: Hi Chat GPT, I’m Miles Cruz. Can I ask you a few questions for a story I’m writing for my school newspaper?

CGPT: Hello Miles Cruz! Of course, I’m here to help. Feel free to ask any questions you have, and I’ll do my best to provide you with accurate and informative answers.

MC: What do you think of the new rival AI, Bard, that Google has just launched?

CGPT: As an artificial intelligence language model, I don’t have personal opinions, but I can tell you some information about the new Google AI called “Bard.”

“Bard” is an AI language model developed by Google that aims to compete with OpenAI’s GPT-3, the same technology I was trained on. Like GPT-3, Bard is a massive neural network designed to process and generate human-like text. It is designed to help people write by offering suggestions for things like sentence structure, grammar, and vocabulary.

While Google has not released many details about Bard’s performance or capabilities, it is expected to be a powerful tool that could have a significant impact on the field of natural language processing. It will be interesting to see how Bard stacks up against other AI language models like GPT-3 and what kinds of applications it will be used for.

MC: What benefits do you think applications like yourself and Bard could have in the future? What harm are you capable of?

CGPT: AI language models like myself and Bard have the potential to provide a range of benefits in the future, including:

- Improved efficiency: AI language models can automate tasks like writing, editing, and translation, which can save time and improve productivity.

- Personalization: AI language models can generate personalized content, such as product recommendations, news articles, and social media posts, based on an individual’s preferences and interests.

- Accessibility: AI language models can improve accessibility for people with disabilities, such as those who have difficulty typing or reading.

- Innovation: AI language models can be used to create new applications and technologies, such as chatbots, virtual assistants, and voice assistants, which can improve the way we interact with technology.

However, there are also potential harms associated with AI language models, including:

- Misinformation: As I mentioned earlier, AI language models can generate misleading or false information if not properly trained or supervised.

- Bias: AI language models can perpetuate and amplify biases in data, leading to discrimination and unfairness.

- Job displacement: AI language models could lead to job displacement for certain professions, particularly those that involve writing or translation.

- Privacy and security: AI language models may collect and process personal data, raising concerns about privacy and security.

Ultimately, the benefits and harms of AI language models depend on how they are developed and deployed. It’s important to carefully consider the ethical and social implications of AI technology and work to mitigate potential harms while maximizing its benefits.

So, there you have it. The ills and greatness of AI explained by AI itself.
